What Does AI Remember?

Cultural Memory in the Age of Large Language Models

Every culture remembers through its media. The clay tablet selects differently than the printing press, the printing press differently than the internet. Each medium shapes its own logic of preservation, determining what gets recorded and what survives the transition to the next format. The question is always the same, even when the medium has changed entirely. What does this thing remember? And what does it forget, through the structure of its remembering?

Large language models are now confronted with this question, whether anyone intended that or not. Millions of people use them daily as interfaces to cultural knowledge. They ask about history, literature, philosophy, law. They ask an LLM to explain the Thirty Years’ War, to summarize Kant’s categorical imperative, or to compare the legal traditions of common law, customary law, and civil law. The answers come fluently and they are by now often extremely competent. They create the impression of a system that has read everything and remembers everything.

This impression, however, is wrong. The answers are often accurate enough, but the model is neither an archive nor a library. It’s a medium that produces cultural knowledge without the institutions that cultural theorists consider constitutive: no community that authorizes it, no specialists who maintain it. Jan and Aleida Assmann’s theory of cultural memory conceives of memory not psychologically but medially and institutionally (I refer to both, Jan for the institutional theory, Aleida for the media and archive analysis, and treat their work here as complementary, which it is not entirely, but productively so for my purposes). Their analytical toolkit is precise for understanding what LLMs do with cultural knowledge. And it reaches a limit, when applied to LLMs, that structures the entire argument of this essay: Assmann’s toolkit presupposes a constitutive community. LLMs, however, have none. What that means is the actual question. I use the categories that follow (canon, archive, the floating gap, active and passive forgetting) knowing that they will not map cleanly. They are diagnostic instruments developed for a world in which memory has institutional carriers. Applying them to a medium that has none is a deliberate test of their limits, and the points at which they fail are as instructive as the points at which they hold.

I first encountered Jan Assmann’s work at a colloquium I co-organized at the Centre for Cultural Studies Research Lübeck (ZKFL). There, as research fellows, we regularly invited scholars to discuss the classics of cultural studies. During Assmann’s visit, the topic was Freud’s Moses, not cultural memory. But through this encounter I began reading his work on memory, institutions, and symbolic forms, and the question that has accompanied me since is a structural one: What does a medium do with the memory it carries?1 2

Assmann’s central insight is actually quite simple. Cultural memory is not stored in minds but in media and the institutional forms that maintain them. The medium shapes what a community regards as binding. This is a structural claim about how cultures maintain continuity across generations, and it applies to temple inscriptions and liturgical calendars just as it does (this is my argument) to the statistical outputs of a neural network trained on several trillion tokens of digitized text.

If LLMs become the primary interface through which millions of people access cultural knowledge, then the question of what they remember and what they forget is not a technical question. It’s a question of cultural identity, and one that the current debate on AI safety, for all its sophistication, has barely begun to ask.

A Training Corpus Is an Act of Selection

Anyone who has worked on digitization projects knows that no database is a neutral container. I spent years digitizing museum and archive collections: 15,000 objects from a former academic museum in Göttingen, portrait collections in Kassel, cartularies from the 18th/19th centuries. The work teaches you something that no theory can convey: every field you define determines what becomes visible. Every category you choose (material, provenance, dating, creator) is itself an act of selection. A seal impression catalogued without its wax color loses a part of its history. A drawing recorded without its verso loses half its information. These are small decisions, made thousands of times, and they determine what a collection remembers.

LLM training follows the same logic, but at a scale where those decisions become invisible.

A training corpus is, structurally speaking, an act of cultural selection. Not a curated one (like a library) and not a canonical one (like a scripture), but an algorithmic one. Selection occurs predominantly by availability and language, not by relevance to a community’s self-understanding. That teams at the major providers now make deliberate decisions about data composition changes less about this than one might hope: the basic structure of the corpus is set before curation begins, and curation can shift the basic structure, but not abolish it. What enters the model is what was digitized and available in sufficient quantity, in the languages the developers prioritize. English dominates and Western academic and journalistic traditions dominate within English. Everything else gets thinner.

LLMs break with this model, in a way that puts Assmann’s categories to the test. According to Assmann, cultural memory requires specialized carriers, institutions of maintenance, a community that recognizes itself in the transmitted material. LLMs have none of this. There are no specialists of LLM memory (no bards, no priests, no archivists of the training data). There is no ritual of transmission. There is no comparable community that recognizes itself in the model’s outputs. And yet the model produces cultural knowledge, or something that looks remarkably like it. It can recite, explain, contextualize, compare. It does everything a carrier of cultural memory is supposed to do, with the exception that it does not know it is doing so and no community has authorized it to do so.

A concrete example: Ask an LLM about the Thirty Years’ War and you get a competent summary of the dates and battles, of the Peace of Westphalia, or the demographic consequences. Ask about the Herero and Nama genocide and the answer you get will tend to be more generic and more cautious. Ask about the oral traditions of the Sámi and the answer becomes thin. The model simply has less to draw on because the training data contained less. Initial empirical analyses point in the same direction: all models tested show cultural asymmetries in the same direction, though with varying intensity.3

Neither Canon nor Archive

The sharpest conceptual tool for understanding what is happening here comes from Aleida Assmann. In her essay “Canon and Archive” she distinguishes two modes of cultural memory that are entirely overlooked in most discussions of LLMs.4

The canon is small, selective, and value-laden. It preserves what Aleida Assmann calls “the past as present.”5 Canonization requires that a community ascribes binding significance to certain works and maintains this ascription across generations through reading, performance, and reinterpretation. Shakespeare holds his place in the Western literary canon not because he is frequently read (although he is), but because reading communities over centuries have decided that his work carries this significance. The canon is active memory. It is the cultural material that a society keeps in use.

The archive is large, accumulative, and de-contextualized. It preserves “the past as past.” Objects in the archive have lost their immediate addressees. They exist as potential: open to new contexts, new interpretations, new uses that no one can predict. The archive is passive memory. It is the storage from which future canons may draw, but it makes no claim about what should be drawn.

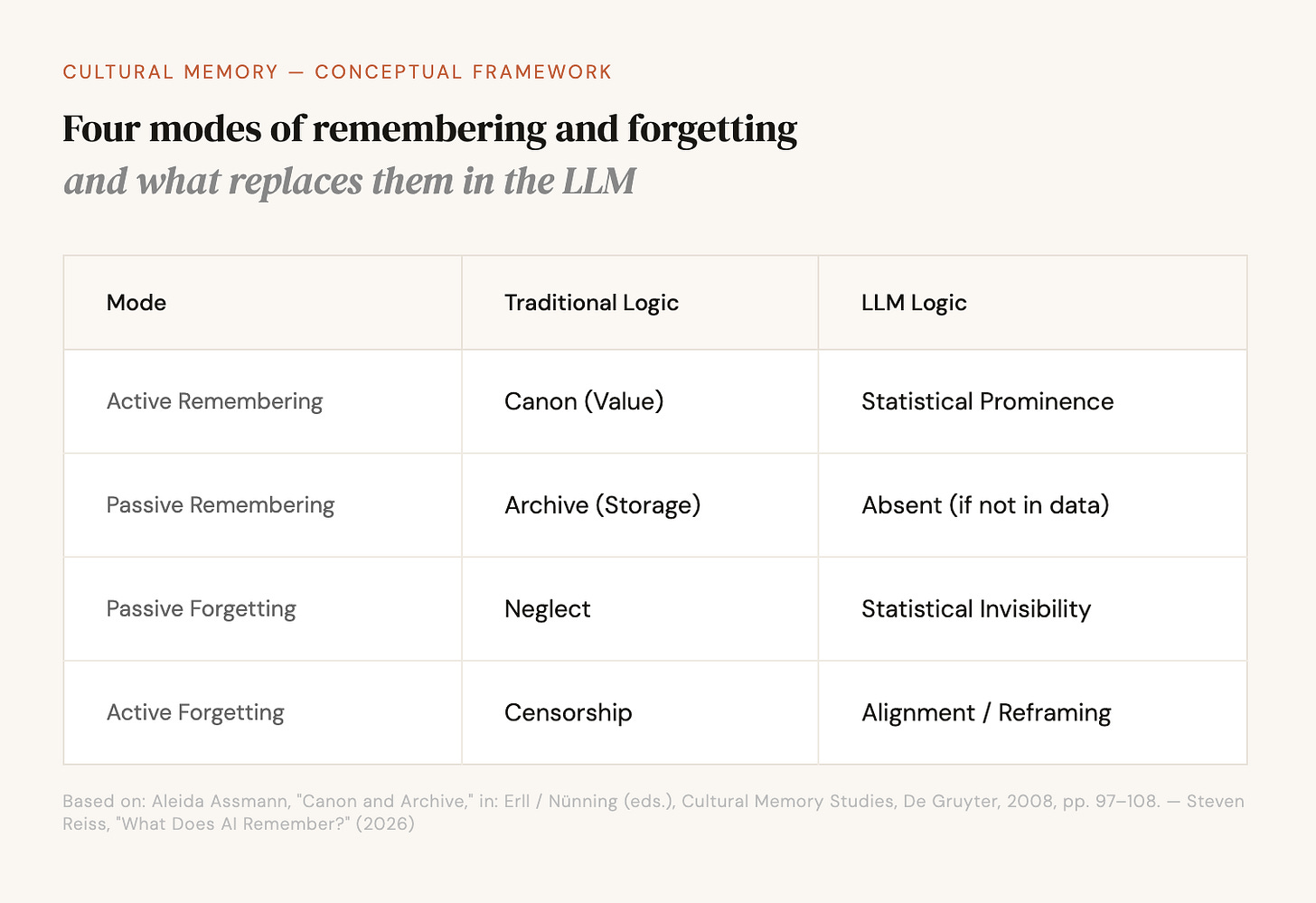

Aleida Assmann distinguishes four modes: active remembering (canon), passive remembering (archive), passive forgetting (neglect), and active forgetting (censorship, destruction).6

An LLM can be mapped onto this diagram, but in a way that collapses both main categories. Shakespeare is prominent not because a community has decided that his work carries binding significance, but because he appears millions of times in the training data. Frequency replaces judgment. The archive function (passive remembering) is practically absent: what was not in the training data does not exist as a dormant resource, it simply does not exist. (Retrieval-Augmented Generation, where a model accesses external sources, creates a functional archive layer, but changes nothing about the structural basis of what the model knows on its own.) Passive forgetting happens through non-inclusion, without intent. And active forgetting takes on a new form, to which I will return.

Active forgetting is perhaps the most interesting cell in the diagram. LLMs do possess a form of deliberate suppression: alignment training and content filtering. But this operates according to a different logic than cultural censorship. It is not about collective identity or political power in the traditional sense. It is about corporate liability and (in the best case) genuine ethical concerns about what a model should say and what it should not. The categories of suppression are new. They cannot be mapped onto the Index Librorum Prohibitorum or the desk of the Soviet censor. They point to something that does not yet have a name.

A clarification is needed here, one that makes the Assmann analogy more honest. Censorship in the historical sense operates at the level of knowledge: it erases and destroys. What a censor burns, no one knows anymore. Alignment training operates primarily at the level of speech: it shapes what the model utters, not necessarily what it represents (although the boundary between representation and utterance is in practice less sharp than this distinction suggests). A trained model can contain patterns it does not articulate because a post-training step dampens that utterance or steers it in a particular direction. This is structurally different from censorship. Post-training and fine-tuning shape model behavior substantially, but they do not undo the frequency-based selection that pretraining has established. They operate on it. What alignment introduces is a second-order judgment: it can reweight outputs, steer them, dampen them, but the material on which it operates was selected by frequency in the first place. The result is closer to what rhetoric calls framing, because content gets reweighted and does not just disappear.

The cultural memory theorist must be careful here. If alignment training does not erase but reframes, then the question is not only what the model “does not say,” but how it says what it does say. Which perspectives are presented as neutral, which are marked as controversial? These shifts are subtler than censorship and therefore harder to capture. Aleida Assmann’s distinction between active and passive forgetting was designed for a world in which memory is either present or erased. LLMs introduce a third possibility: the present that systematically sounds different than it could.

The floating gap tilts

LLMs introduce a form of cultural forgetting that fits none of the categories in the Assmanns’ work. The term I would propose is statistical invisibility.

A cultural tradition, a language, a body of knowledge that was never digitized, or digitized but underrepresented, or represented but in a language that constitutes only a tiny fraction of the training data: these undergo a forgetting that is structurally different from anything Assmann describes. No one decided that Wolof poetry should be less present in an LLM than English Romanticism. No committee made this choice and no censor intervened. The selection occurred through the structure of the internet, the economics of digitization, and the path dependencies of data collection. It is forgetting without intent.

Jan Assmann describes, following the historian Jan Vansina, for oral societies the so-called “floating gap”: a zone of silence between the recent past (communicative memory, which spans roughly eighty years, the range of living generations) and the mythical past (cultural memory, the founding narratives of origin). Between these two horizons lies a period that no one remembers, neither the living nor the tradition. This gap moves forward with each generation.7

LLMs produce a different kind of gap. The silence here is above all cultural. It surrounds traditions that were never part of the digitized, predominantly English-language, predominantly Western record that constitutes the bulk of the training corpus. The floating gap has, in this new medium, gained an additional direction: it moves not only forward through time (LLMs, too, know less about poorly documented epochs than about well-documented ones) but also laterally across cultures. The analogy is deliberately stretched: Vansina’s floating gap is a temporal phenomenon in oral societies. But the structural parallel justifies the term, for in both cases the medium itself produces a zone of systematic ignorance that remains invisible to its users. The gap separates the digitally visible from the digitally invisible, and it follows the existing inequalities of the global information economy.

In practice, a student in Dakar who asks an LLM about the philosophical traditions of the Wolof language will receive less precise information than a student in London who asks about British empiricism. The student in Dakar does not encounter a refusal but a thinning out, i. e. shorter answers, more generic phrasing, fewer names and dates and internal distinctions. The model has learned less because the training data contained less. And the training data contained less because the digital record is thinner. And the digital record is thinner because several mechanisms work together: the economic incentives of data collection (English-speaking users are the larger market), safety filters that are not calibrated for languages other than English and structurally exclude non-English content,8 and the sheer path dependency of what was digitized first. Each link in this chain is individually explicable. Together they produce a systematic cultural asymmetry in a medium that presents itself as universal.

This is where the question becomes relevant for governance. The EU AI Act, the ongoing debates about training data transparency, the question of cultural sovereignty in the digital age: these touch the same structural problem, even if they rarely name it as such. If LLMs become primary interfaces for cultural knowledge (and they will, whether regulators are ready or not), then the composition of the training data is not a technical specification but a cultural-political decision, whether it was intended as such or not.

The difficulty is that no existing category captures what is happening and there are no actors who intended the selection as a cultural one. It is a new form of cultural mediation for which neither the concepts nor the responsibilities nor the instruments exist. The question is too cultural for technology regulation and too technical for cultural authorities.

Whose Memory?

The companies building LLMs are, whether they acknowledge it or not, in the business of shaping cultural memory. Not as curators (they do not select by value), not as archivists (they do not preserve for future retrieval), but as something new: operators of a medium that produces the appearance of comprehensive cultural knowledge while structurally favoring certain traditions over others.

Jan Assmann writes that cultural memory always has its “specialized carriers.” In ancient societies these were shamans, bards, priests. In literate societies, scholars, librarians, curators. The structure of participation, Assmann notes, is “never strictly egalitarian.”9 Access to cultural memory, the ability to interpret it and to decide what counts, has always been concentrated.

LLMs introduce a paradox. They democratize access to cultural knowledge in a way no previous medium has achieved. Anyone with an internet connection can ask a question and receive an answer drawn from a corpus that no individual could read in a lifetime. But the power of selection (who decides what is in the training data, how it is weighted, what is filtered) is concentrated in a very small number of companies. This is not an entirely new observation. But Assmann’s vocabulary gives it a specific edge: the carriers of cultural memory have always been few. What is new: they are now corporations, and their selection criteria are opaque even to the communities whose memory they mediate.

One might object that this is overstated: LLMs are tools, not cultural temples, and whoever holds them responsible for mediating cultural memory is engaging in precisely the mystification that benefits the companies building them. But this objection confuses intention with effect. Google did not intend to become the arbiter of what is findable and what is not. Wikipedia did not intend to become a kind of consensus reality. Both became so through use, through billions of queries, and LLMs follow the same path, only considerably faster.

The Archive Without an Archivist

Every medium has reordered what a culture could remember and what it would forget. The manuscript, the printing press, the internet, the search engine: each created a new economy of access in which the question of what is remembered became inseparable from the question of what the medium makes visible. LLMs are the latest entry in this series, with a difference that has received too little attention. A search engine shows sources and marks its limits by what it does not find. An LLM answers with the same fluency whether it knows much or little. The thinning out is invisible. This is qualitatively new.

The Assmanns’ analytical tools (developed for ancient Egyptian temple libraries, medieval canon formation, and modern Holocaust remembrance) prove surprisingly precise when applied to a technology that did not exist when they were writing. The distinctions hold and the categories illuminate more than one would expect. And where the categories break (where LLMs do something that neither “canon” nor “archive” nor “communicative memory” can capture), that is precisely where new thinking is needed. Statistical invisibility is one such place. The collapse of the canon-archive distinction another: what Smit, Smits, and Merrill call “stochastic remembering,” memory as probabilistic artifact rather than cultural decision.10

What would it mean to take the cultural memory function of LLMs seriously? The most ambitious governance approaches in the industry aim at safety and scaling, not at cultural representativeness. They define thresholds for dangerous capabilities, anchor behavioral principles in the model itself, document what a system can and cannot do. These are serious instruments. But they address risks, not asymmetries. The question of which cultures a model remembers and which it statistically forgets lies outside their scope.

The EU AI Act has, with the GPAI provisions, created its own framework for large language models that demands transparency about training data, but this transparency obligation targets copyright compliance and systemic risks, not cultural exclusion structures.11 The EU Commission’s AI Office, which is supposed to oversee compliance with these rules, has no mandate for cultural representativeness. Good will exists, but what’s missing is an institution with the mandate to act on it. A cultural audit of the training data, conducted not by the companies themselves but by independent experts for the affected language and knowledge communities, would be a start. Aleida Assmann’s observation that archives have their own structural mechanisms of exclusion applies here precisely, with the difference that the selection principles of an archive are in principle public and contestable.12 The selection principles of an LLM training corpus are not.

Humanities scholars belong in the governance of these systems, not as advisors to be consulted and then ignored. That their expertise is still missing from AI development has been stated pointedly by eight researchers from American institutions in a preprint: the disciplinary inputs to the development of generative AI are surprisingly narrow.13

Two questions mark where this work would need to begin. First: Assmann’s concept of cultural memory is always thought functionally. It constitutes a community, gives it identity, and rewrites past into present. An LLM has no such community. Does the Assmannian toolkit apply at all to a medium that has no constitutive group, or must we fundamentally extend it? Elena Esposito has asked, from a systems-theory perspective, under what conditions communication is possible when one partner is not human. Her answer shifts the question but does not answer it for cultural memory.14 Second: What follows from this institutionally? The tools to measure cultural asymmetries exist. What is missing is a place where the results of such measurements would have consequences, an authority between technology regulation and cultural policy that does not yet exist.15 16

Institutional incentives point in a different direction: market share and the next model release. And the difficulty is genuine. A cultural audit of training data sounds like a reasonable demand until one asks who would conduct it and against what standard. Representativeness is itself a contested concept. Any authority charged with measuring cultural balance in a training corpus would bring its own selection logic, its own blind spots, its own institutional interests. The point is not that a perfect standard is achievable. The point is that the current situation, in which no standard exists and no institution is responsible, is untenable when the medium is already shaping what millions of people regard as cultural knowledge. The tools for asking this question have been available longer than most people in the AI debate realize. They were developed by scholars of ancient Egypt and the twentieth century, for other media and other memory crises. They fit this one.

Bibliography

Assmann, Aleida: “Canon and Archive,” in: Astrid Erll/Ansgar Nünning (eds.), Cultural Memory Studies: An International and Interdisciplinary Handbook, Berlin/New York: Walter de Gruyter, 2008, pp. 97–108.

Assmann, Jan: “Communicative and Cultural Memory,” in: Astrid Erll/Ansgar Nünning (eds.), Cultural Memory Studies: An International and Interdisciplinary Handbook, Berlin/New York: Walter de Gruyter, 2008, pp. 109–118.

Assmann, Jan: Das kulturelle Gedächtnis: Schrift, Erinnerung und politische Identität in frühen Hochkulturen, Munich: C. H. Beck, 1992.

Esposito, Elena: Artificial Communication: How Algorithms Produce Social Intelligence, Cambridge/London: MIT Press, 2022.

Gensburger, Sarah/Clavert, Frédéric (eds.): “Is Artificial Intelligence the Future of Collective Memory?,” in: Memory Studies Review, vol. 1, no. 2, 2024, pp. 195–208, doi: 10.1163/29498902-202400019.

Gopinadh et al.: “Regional Bias in Large Language Models,” arXiv, January 2026, arXiv:2601.16349, doi: 10.48550/arXiv.2601.16349.

Halbwachs, Maurice: Les cadres sociaux de la mémoire, Paris: Félix Alcan, 1925.

Klein et al.: “Provocations from the Humanities for Generative AI Research,” arXiv:2502.19190, preprint, updated January 2026, doi: 10.48550/arXiv.2502.19190.

Kollias, Phivos-Angelos: “Nostophiliac AI: Artificial Collective Memories, Large Datasets and AI Hallucinations,” in: Memory Studies Review, vol. 1, no. 2, 2024, pp. 292–322, doi: 10.1163/29498902-202400014.

Schuh, Julien: “AI as Artificial Memory: A Global Reconfiguration of Our Collective Memory Practices?,” in: Memory Studies Review, vol. 1, no. 2, 2024, pp. 231–255, doi: 10.1163/29498902-202400012.

Smit, Rik/Smits, Thomas/Merrill, Samuel: “Stochastic Remembering and Distributed Mnemonic Agency: Recalling Twentieth Century Activists with ChatGPT,” in: Memory Studies Review, vol. 1, no. 2, 2024, pp. 209–230, doi: 10.1163/29498902-202400015.

Stiegler, Bernard: La technique et le temps, 3 vols., Paris: Galilée, 1994–2001.

Tao et al.: “Cultural Bias and Cultural Alignment of Large Language Models,” in: PNAS Nexus, vol. 3, no. 9, September 2024, pgae346, doi: 10.1093/pnasnexus/pgae346.

Van Noorden, Richard: “Large language models are biased — local initiatives are fighting for change,” in: Nature, November 28, 2025, doi: 10.1038/d41586-025-03891-y.

Vansina, Jan: Oral Tradition as History, Madison: University of Wisconsin Press, 1985.

Jan Assmann, “Communicative and Cultural Memory,” in: Astrid Erll/Ansgar Nünning (eds.), Cultural Memory Studies: An International and Interdisciplinary Handbook, Berlin/New York: Walter de Gruyter, 2008, pp. 109–118. The concept of collective memory goes back to Maurice Halbwachs (Les cadres sociaux de la mémoire, 1925). Assmann’s theory of cultural memory distinguishes itself from Halbwachs by placing the medial and institutional dimension at the center, which remains underexposed in Halbwachs. It is precisely this dimension that makes it more productive for the analysis of LLMs.

Bernard Stiegler’s La technique et le temps (3 vols., 1994–2001) would be an alternative tool for the analysis of media and memory. I choose Assmann because his toolkit places the institutional dimension (community, carriers, ritual) at the center, which in Stiegler recedes in favor of the technical-pharmacological analysis. For the question of what LLMs do with cultural memory, this institutional perspective is the more precise lever.

Tao et al., “Cultural Bias and Cultural Alignment of Large Language Models,” in: PNAS Nexus, vol. 3, no. 9, September 2024, pgae346, doi: 10.1093/pnasnexus/pgae346; Gopinadh et al., “Regional Bias in Large Language Models,” arXiv, January 2026, arXiv:2601.16349, doi: 10.48550/arXiv.2601.16349. Tao et al. tested five consecutive GPT models and found a consistent Western cultural bias across all of them; Gopinadh et al. evaluated ten models across providers (including Claude 3.5 Sonnet, GPT-4o, Gemini 1.5 Flash) and found considerable variation in the intensity of regional bias, but not in its direction.

Aleida Assmann, “Canon and Archive,” in: Erll/Nünning (eds.), Cultural Memory Studies, pp. 97–108.

Aleida Assmann, “Canon and Archive,” p. 100.

Aleida Assmann, “Canon and Archive,” pp. 97–98.

Jan Vansina, Oral Tradition as History, Madison: University of Wisconsin Press, 1985, pp. 23–24. Jan Assmann adopts the term in: Das kulturelle Gedächtnis: Schrift, Erinnerung und politische Identität in frühen Hochkulturen, Munich: C. H. Beck, 1992, p. 50.

Cf. Vukosi Marivate, quoted in: Richard Van Noorden, “Large language models are biased — local initiatives are fighting for change,” in: Nature, November 28, 2025, doi: 10.1038/d41586-025-03891-y.

Jan Assmann, “Communicative and Cultural Memory,” p. 111.

Smit, Rik/Smits, Thomas/Merrill, Samuel, “Stochastic Remembering and Distributed Mnemonic Agency: Recalling Twentieth Century Activists with ChatGPT,” in: Memory Studies Review, vol. 1, no. 2, 2024, pp. 209–230, doi: 10.1163/29498902-202400015. Smit, Smits, and Merrill coin the term “stochastic remembering” for the process by which LLMs reproduce cultural knowledge through statistical probability. The term captures precisely what is described here as the collapse of the canon-archive distinction: memory as probabilistic artifact, not as cultural decision.

Regulation (EU) 2024/1689 of the European Parliament and of the Council (AI Act), Articles 51 ff. (GPAI models), in particular Article 53(1) (transparency obligations for providers of GPAI models). The obligation refers to a “sufficiently detailed summary” of the training data, but targets copyright compliance (Article 53(1)(d)), not cultural representativeness.

Aleida Assmann, “Canon and Archive,” pp. 97–98.

Klein et al., “Provocations from the Humanities for Generative AI Research,” arXiv:2502.19190, preprint, updated January 2026, doi: 10.48550/arXiv.2502.19190.

Elena Esposito, Artificial Communication: How Algorithms Produce Social Intelligence, Cambridge/London: MIT Press, 2022. Esposito asks under what conditions communication is possible when one partner is not human, an approach that extends the question raised here about the medium without a constitutive community from a systems-theory perspective.

Sarah Gensburger/Frédéric Clavert (eds.), “Is Artificial Intelligence the Future of Collective Memory?,” in: Memory Studies Review, vol. 1, no. 2, 2024, pp. 195–208, doi: 10.1163/29498902-202400019. The special issue includes, among others, Julien Schuh, “AI as Artificial Memory: A Global Reconfiguration of Our Collective Memory Practices?,” ibid., pp. 231–255; as well as Phivos-Angelos Kollias, “Nostophiliac AI: Artificial Collective Memories, Large Datasets and AI Hallucinations,” ibid., pp. 292–322, doi: 10.1163/29498902-202400014. Kollias distinguishes between curated and uncurated data material as the basis of what he calls “artificial collective memory,” a category that corresponds to the concept of statistical invisibility proposed here.

This essay deals exclusively with text-based language models. Multimodal models that process images, audio, and video intensify the problem described here, because visual and oral traditions come into play whose digitization gaps are even larger than those of written culture.